Enhancing Efficiency with AI-Powered Conversational Search and Summarization

Role: Product & Design Lead (Discovery & Strategy)

Scope: AI-powered conversational search and summarization for Hayes (healthcare and clinical insights)

Timeline: 3 months

Impact: Reduced manual effort, improved information retrieval speed, and established an AI foundation across products

Overview

Healthcare professionals at Hayes spend significant time searching, validating, and summarizing clinical study findings, policy, and appeals data. I led the exploration and definition of an AI-powered solution to streamline this process, balancing speed to market with long-term scalability.

The Situation

Hayes users—policy writers, clinicians, and appeal specialists—operate in a high-stakes environment where accuracy and speed are critical.

Their workflows required:

Searching across multiple documents

Manually extracting relevant information

Synthesizing findings into summaries

This created friction at every step:

Slow turnaround times

High cognitive load

Increased risk of errors

The existing search experience wasn’t built for how users actually worked.

The Problem

The core issue wasn’t just “search”—it was decision-making inefficiency.

1. Fragmented Information Retrieval

Users had to manually scan multiple documents to find relevant answers.

2. High-Effort Summarization

Even after finding information, users still had to interpret and condense it themselves.

3. Accuracy & Trust Risks

Manual workflows increased the likelihood of missed or misinterpreted details.

4. Lack of Workflow Integration

Search results didn’t align with how users actually completed tasks like:

Policy updates

Appeals review

Coverage verification

Why This Was Hard

High-stakes domain (healthcare decisions require precision)

Low trust in AI outputs without transparency

Cross-functional complexity (design, engineering, research, GTM)

Ambiguous starting point (no clear “right” AI pattern yet)

We weren’t just designing a feature—we were defining how AI should behave within the product.

Strategy

We aligned around a clear hypothesis:

If we provide AI-generated answers with clear sourcing, users can complete complex workflows faster, with less effort and greater confidence.

This led to three priorities:

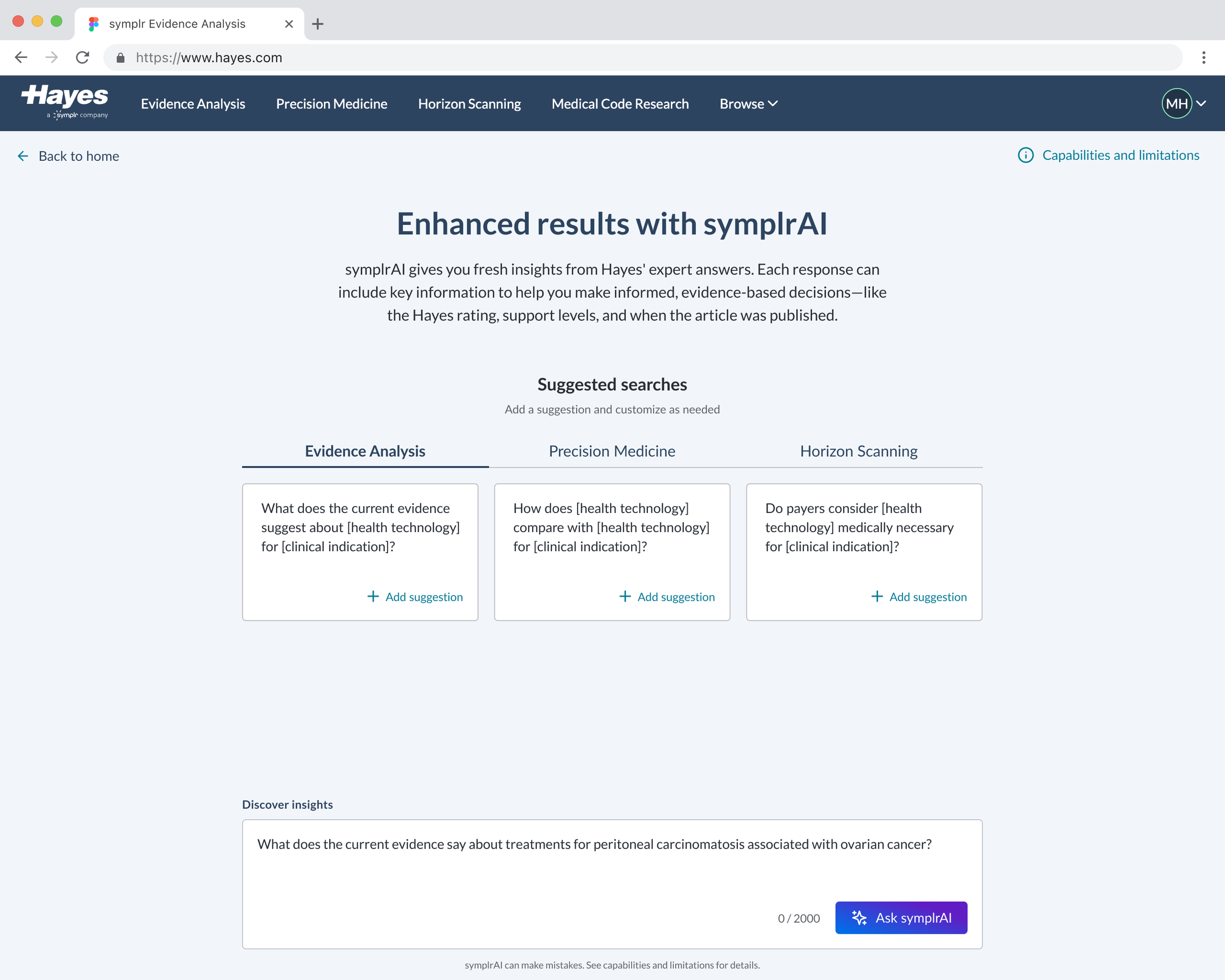

Start narrow with high-value use cases

Prioritize trust and transparency

Ship quickly to validate adoption

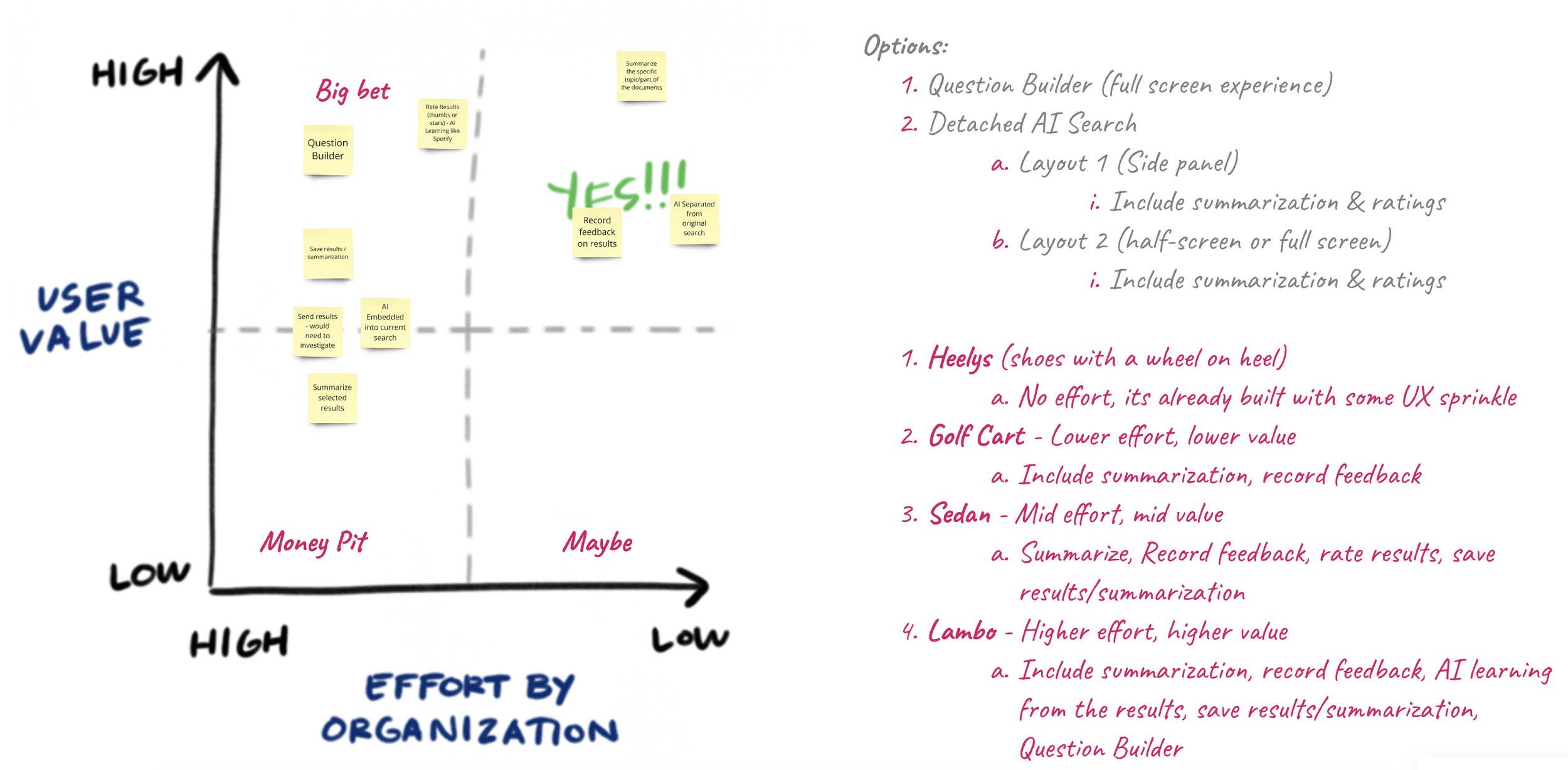

Key Decisions

1. Start with a Focused MVP (Not a Full AI Assistant)

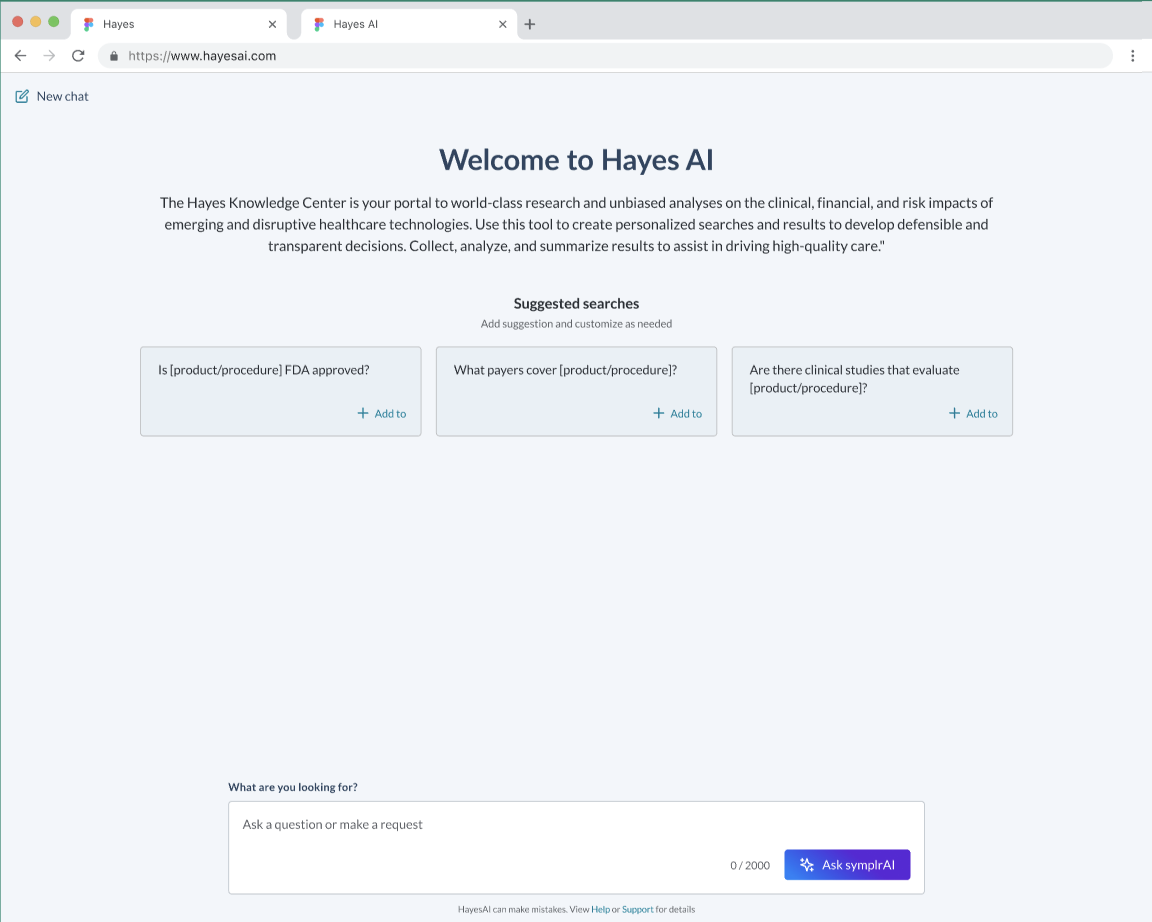

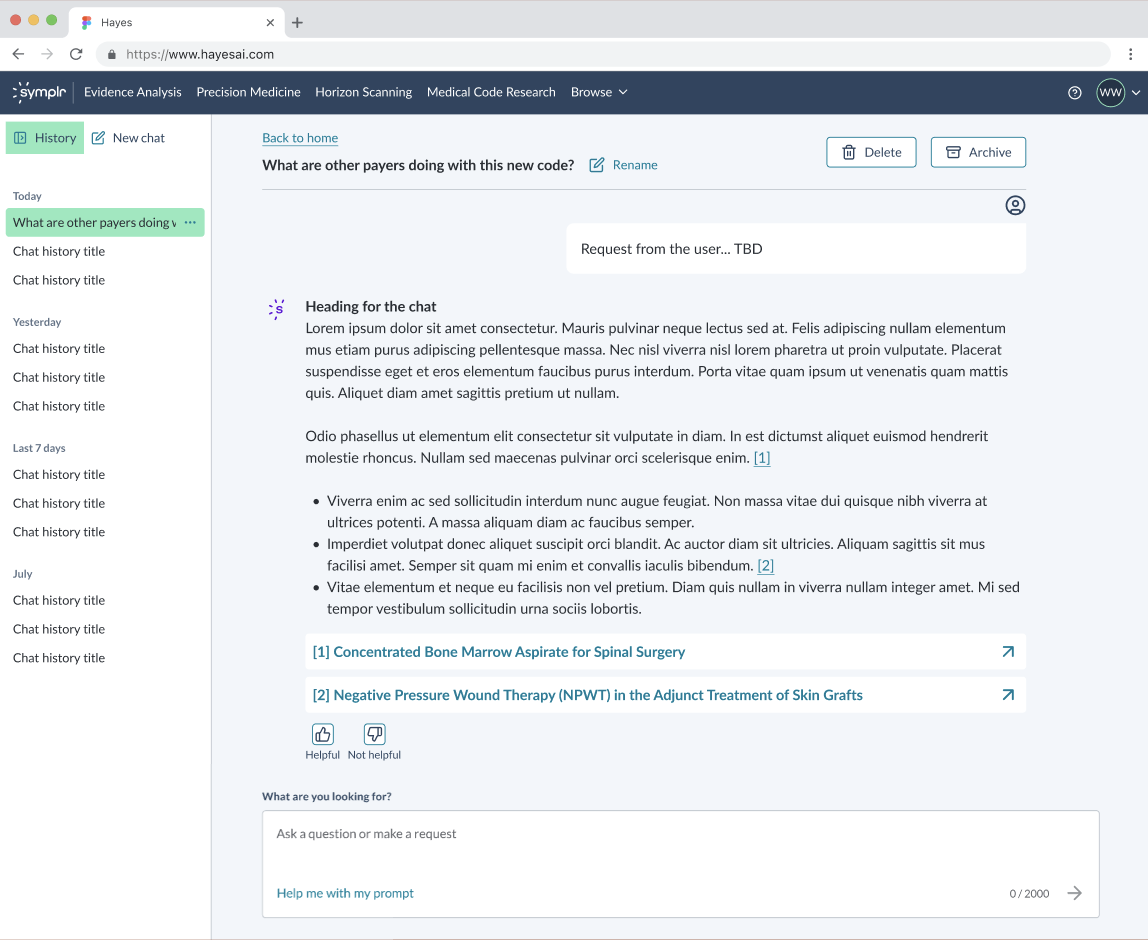

We scoped Phase 1 to summarization and conversational search within a contained experience.

Why: We needed to validate usefulness before expanding scope

Tradeoff: Limited functionality, but faster learning

2. Separate AI from Core Search (Initially)

We introduced AI in a dedicated surface instead of embedding it directly into existing workflows.

Why: Reduced risk and allowed controlled testing

Tradeoff: Added friction compared with a fully integrated experience

3. Design for Iteration, Not Perfection

We structured the roadmap into progressive milestones:

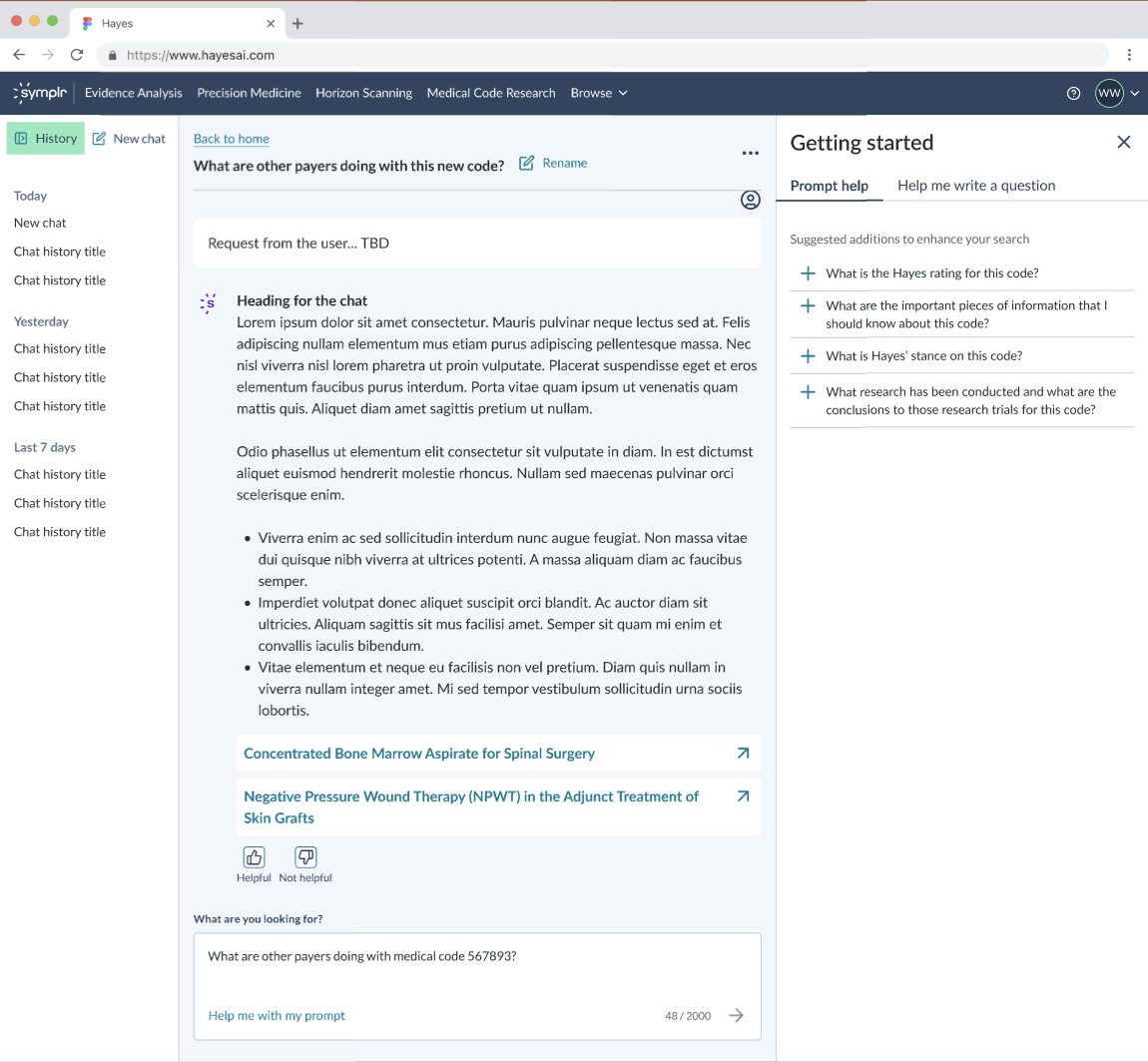

Phase 1 (“Roller skates”) → Core summarization + feedback

Phase 2 (“Electric scooter”) → Ratings, saved results, history

Phase 3 (“Sports car”) → Prompt guidance + deeper interaction

Why: AI quality and trust improve through iteration

Tradeoff: Early versions feel incomplete but enable faster validation

4. Prioritize Trust Signals Early

We emphasized:

Source visibility

Feedback mechanisms

Why: Adoption depends on user confidence in AI outputs

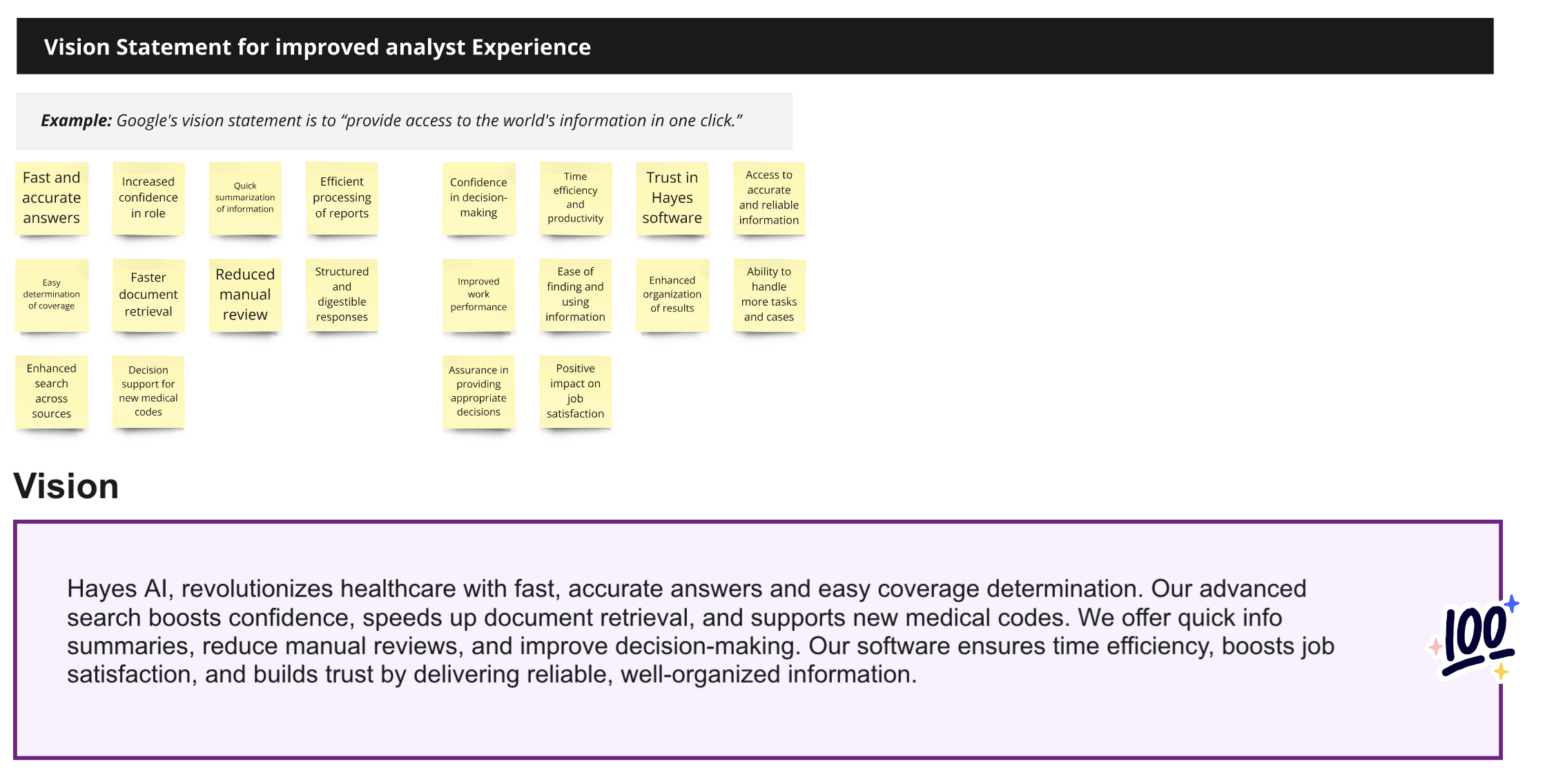

How I Led the Work

I led the initiative from discovery through execution strategy:

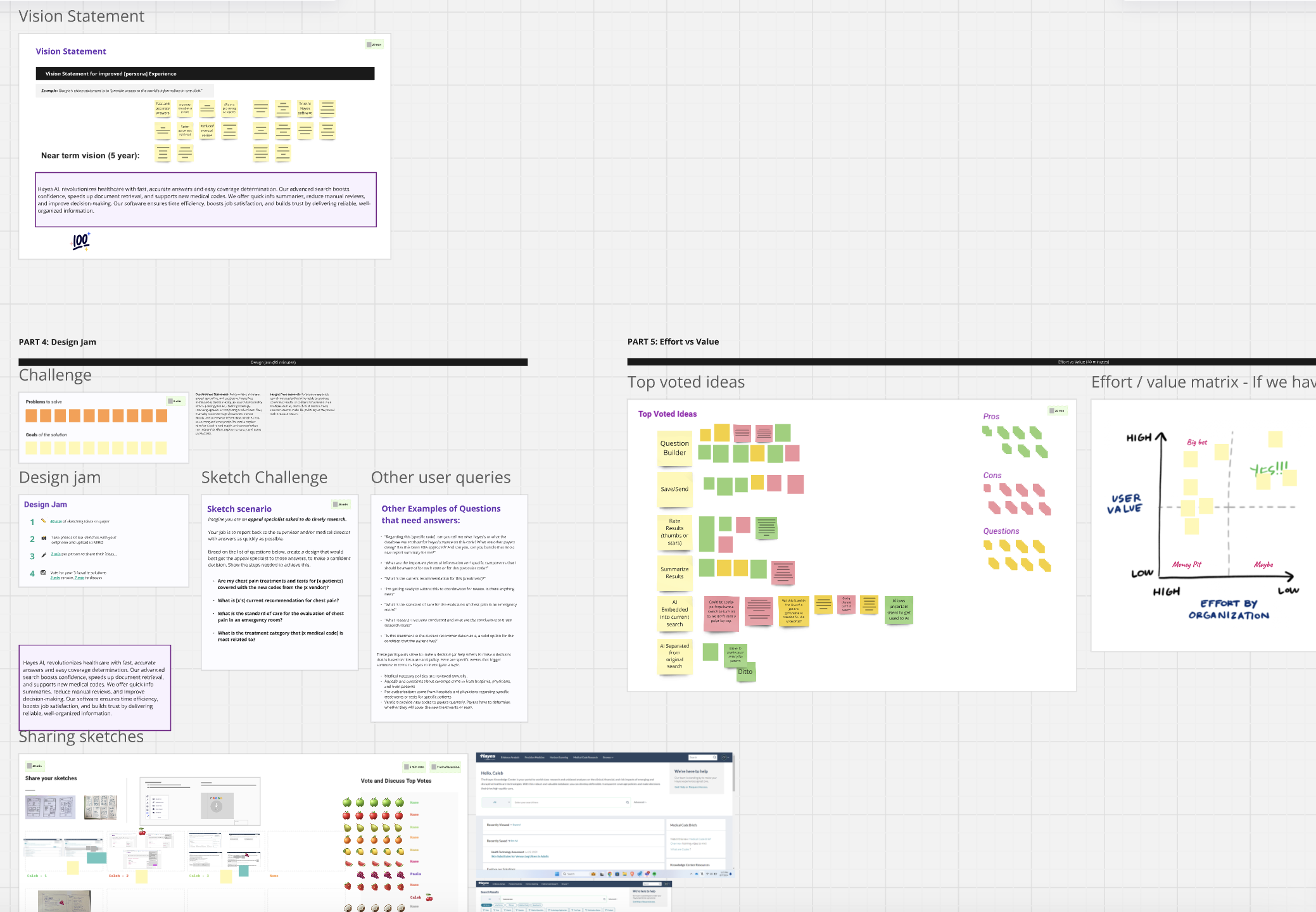

Discovery & Alignment

Facilitated cross-functional workshops (design, product, engineering, research, GTM)

Synthesized user pain points into clear opportunity areas

Defined the initial product vision

Vision + Strategy

Translated ideas into a phased execution plan

Scoped MVP to balance speed and value

Defined milestones aligned to learning goals

Execution Support

Partnered with the designer to shape the user experience

Ensured alignment across teams as we moved toward delivery

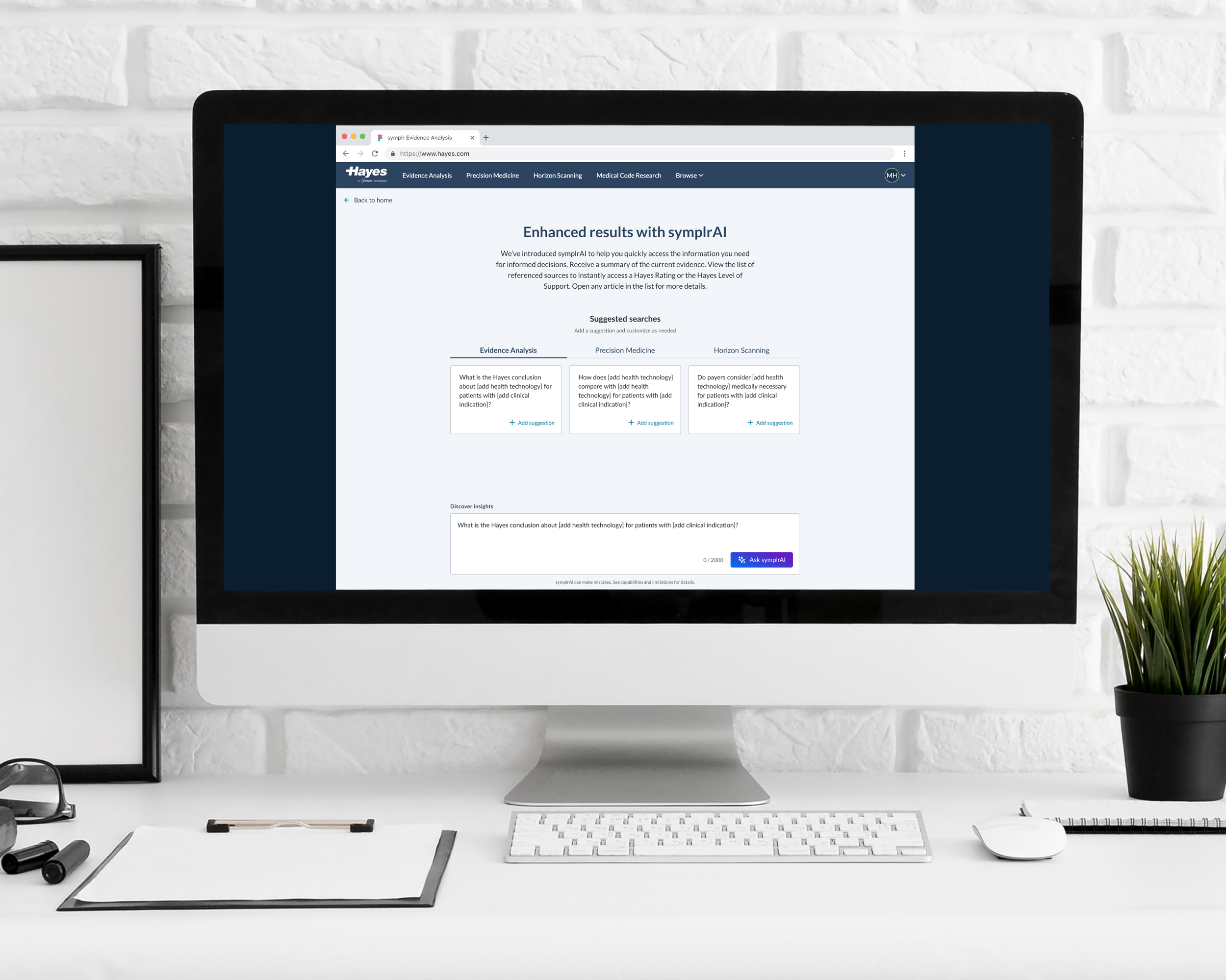

Solution

Phase 1 delivered:

AI-powered summarization

Conversational search interface

Feedback capture for continuous improvement

The experience allowed users to:

Ask questions directly

Receive synthesized answers

Reference supporting sources
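To make the shape of that interaction concrete, here is a minimal sketch of how an answer, its supporting sources, and the user's feedback could be modeled together. It is an illustration only, not the shipped implementation: the names `SourceCitation`, `Answer`, and `render` are hypothetical, and the optional `section` field is an assumption about how more precise citations might be represented.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SourceCitation:
    """Hypothetical pointer back to a document that supports an answer."""
    document_title: str
    # Assumed optional field: a section label for more precise citations.
    section: Optional[str] = None

    def label(self) -> str:
        # Include the section only when one is known.
        if self.section:
            return f"{self.document_title} ({self.section})"
        return self.document_title


@dataclass
class Answer:
    """Hypothetical container for one question, its summary, and its sources."""
    question: str
    summary: str
    citations: List[SourceCitation] = field(default_factory=list)
    # None until the user rates the answer.
    helpful: Optional[bool] = None

    def is_sourced(self) -> bool:
        return len(self.citations) > 0

    def record_feedback(self, helpful: bool) -> None:
        self.helpful = helpful


def render(answer: Answer) -> str:
    """Format the summary followed by a numbered source list."""
    lines = [answer.summary, "", "Sources:"]
    if not answer.citations:
        lines.append("(no sources available)")
    for number, citation in enumerate(answer.citations, start=1):
        lines.append(f"[{number}] {citation.label()}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Placeholder data for illustration; not real Hayes content.
    example = Answer(
        question="Example question",
        summary="Example summary of the findings.",
        citations=[
            SourceCitation("Example policy document", "Section 2"),
            SourceCitation("Example clinical report"),
        ],
    )
    print(render(example))
    example.record_feedback(True)
```

Keeping the sources and the feedback flag on the same record as the summary means an answer is never shown without its sources, and each rating stays tied to the exact answer the user saw.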

Watch it work!

Click the video above to watch me interact with our shipped Phase 1 AI solution.

Early Results & Learnings

What worked:

Users saw immediate value in reduced manual effort

Summarization accelerated information processing

Where we saw friction:

Users were hesitant to fully trust AI outputs

Citations lacked precision (needed section-level linking)

Users expected AI answers at the top of results (Google-like behavior)

These insights directly informed Phase 2 priorities.

What This Enabled

Established an AI interaction pattern across the product

Created a foundation for scalable AI capabilities

Enabled faster iteration based on real user feedback

What This Demonstrates

Leading 0→1 product exploration in ambiguous spaces

Translating AI potential into practical, testable solutions

Balancing speed, scope, and user trust

Driving alignment across cross-functional teams

Designing for learning and iteration, not just delivery

Final Takeaway

This work wasn’t just about adding AI—it was about redefining how users access and act on information.

By starting small, prioritizing trust, and iterating quickly, we turned a complex, manual workflow into a foundation for faster, more intelligent decision-making.